RWKV Open Source Development Blog

10-100x lower inference cost = lower carbon footprint

With the usage of AI models growing rapidly worldwide and the threat of global warming, the need for greener AI models that reduce our carbon footprint is greater than ever.

In that regard, we are proud to say that RWKV (the model our team works on) has been independently benchmarked as the world’s greenest and most energy-efficient AI model/architecture on a per-token-output basis, among models of the same parameter size (7B params).

The energy efficiency of the RWKV architecture derives from the 10-100x compute efficiency of our linear transformer architecture, whose cost grows linearly with context length, versus the quadratic scaling of standard transformer architectures. This is a benefit we expect to scale even better as our models get larger.

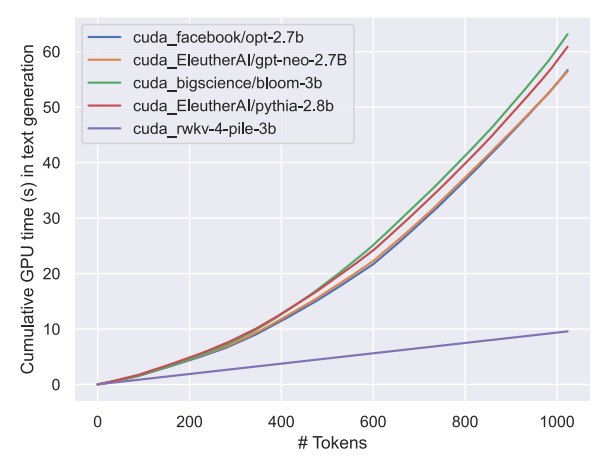

Graph taken from the RWKV-v4 paper: 5x cheaper compute at 1k tokens, 10x cheaper at 2k tokens, and 100x+ cheaper beyond 20k tokens.
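To make the linear-versus-quadratic comparison concrete, here is a minimal toy sketch. It is not the benchmark from the paper: the function names and the abstract "cost units" are assumptions made for illustration, and it deliberately ignores the parts of a transformer that already scale linearly, so its absolute ratios are not comparable to the figures in the graph. What it does show is the shape of the trend: the cost ratio grows roughly in proportion to context length, in line with the caption's jump from 5x to 10x when going from 1k to 2k tokens.

```python
def quadratic_cost(n_tokens: int) -> int:
    """Toy cost model (assumption): token t attends to all t tokens so far.

    Total work is 1 + 2 + ... + n = n * (n + 1) / 2 abstract units.
    """
    return n_tokens * (n_tokens + 1) // 2


def linear_cost(n_tokens: int, per_token: int = 1) -> int:
    """Toy cost model (assumption): fixed work per token, as with a
    fixed-size recurrent state that is updated once per token."""
    return n_tokens * per_token


def cost_ratio(n_tokens: int) -> float:
    """How many times more expensive the quadratic toy model is."""
    return quadratic_cost(n_tokens) / linear_cost(n_tokens)


if __name__ == "__main__":
    for n in (1_000, 2_000, 20_000):
        print(f"{n:>6} tokens: quadratic/linear cost ratio = {cost_ratio(n):.1f}")
```

In this toy model, doubling the context length roughly doubles the ratio, which is why the advantage of a linear architecture keeps widening as contexts get longer.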

Combined with the fact that RWKV models scale similarly to transformers in evals when compared against other models trained on the same dataset, the industry-wide benefits of scaling a more energy-efficient architecture like RWKV will be significant.

Towards a future with more, not fewer, alternatives in AI, along with the unique benefits each architecture will bring us.

Repost note: This is a repost of a past blog post, published prior to the setup of this blog.
