- Reinforcement Learning with Verifiable Rewards (RLVR) was established and popularised by papers including Tülü 3 (from AI2), DeepSeek-R1, DeepSeekMath, and Reinforcement Learning with Verifiable Rewards Implicitly Incentivizes Correct Reasoning in Base LLMs
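
To make the idea concrete, here is a minimal Python sketch of a "verifiable" reward, assuming a math task whose answer is a single number. The reward is 1.0 when the last number in a completion matches the reference and 0.0 otherwise, so no learned reward model is involved. The second function shows group-relative advantage normalisation in the style of GRPO (Group Relative Policy Optimization, introduced in DeepSeekMath), where the rewards of several completions for the same prompt are standardised within that group. The function names, the regex-based answer extraction and the use of the population standard deviation are illustrative assumptions, not the exact recipe of any of the papers above.

```python
import re
import statistics

def verifiable_reward(completion: str, reference: str) -> float:
    """Binary reward: 1.0 if the last number in the completion equals the reference answer."""
    # Illustrative answer extraction (assumption): take the last integer or decimal in the text.
    numbers = re.findall(r"-?\d+(?:\.\d+)?", completion)
    if not numbers:
        return 0.0
    return 1.0 if numbers[-1] == reference.strip() else 0.0

def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Standardise rewards within one group of completions for the same prompt (GRPO-style)."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std: an illustrative choice
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled completions for the prompt "What is 2 + 2?"
completions = [
    "2 + 2 = 4, so the answer is 4",
    "The answer is 5",
    "I think it's 4",
    "no idea",
]
rewards = [verifiable_reward(c, "4") for c in completions]
advantages = group_relative_advantages(rewards)
print(rewards)     # [1.0, 0.0, 1.0, 0.0]
print(advantages)  # correct answers get a positive advantage, wrong ones a negative one
```

If every completion in a group receives the same reward, all advantages in this sketch are zero, so that group contributes no learning signal.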

Papers

- Deep reinforcement learning from human preferences

- Direct Preference Optimization: Your Language Model is Secretly a Reward Model - DPO

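- Group Robust Preference Optimization in Reward-free RLHF - GRPO (not to be confused with Group Relative Policy Optimization, also abbreviated GRPO, introduced in DeepSeekMath)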

- Reinforcement Learning with Verifiable Rewards Implicitly Incentivizes Correct Reasoning in Base LLMs

- Illustrating Reinforcement Learning from Human Feedback (RLHF)

- Is Feedback All You Need? Leveraging Natural Language Feedback in Goal-Conditioned Reinforcement Learning

- Learning to summarize from human feedback

- Playing Atari with Deep Reinforcement Learning

- Scaling Laws for Reward Model Overoptimization

- Training language models to follow instructions with human feedback - InstructGPT
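
For reference, the DPO objective can be sketched for a single preference pair, assuming you already have the summed sequence log-probabilities of the chosen response y_w and the rejected response y_l under both the policy π_θ and a frozen reference model π_ref. The loss is −log σ(β·[(log π_θ(y_w|x) − log π_ref(y_w|x)) − (log π_θ(y_l|x) − log π_ref(y_l|x))]). The function name and the default β = 0.1 are illustrative choices for this sketch, not values taken from the paper.

```python
import math

def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """DPO loss for one preference pair (chosen y_w, rejected y_l)."""
    # Log-ratios of policy vs. reference; beta times these are DPO's implicit rewards.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    z = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(z)) == softplus(-z), written in a numerically stable form.
    return max(-z, 0.0) + math.log1p(math.exp(-abs(z)))

# When the policy equals the reference, the loss is log(2), about 0.693.
print(dpo_loss(-12.0, -15.0, -12.0, -15.0))
# Raising the chosen response's log-prob relative to the reference lowers the loss.
print(dpo_loss(-10.0, -15.0, -12.0, -15.0))
```

In practice the log-probabilities come from batched forward passes and the loss is averaged over a batch; the scalar version here only shows the arithmetic.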

Surveys and Reviews

- Understanding Reinforcement Learning for Model Training, and future directions with GRAPE

- LLM Post-Training: A Deep Dive into Reasoning Large Language Models

Implementation

- OpenRLHF docs - not very clean or readable according to Duarte; Patrick says Ray is a pain in the ass (the authors presented at RayCon, so it definitely uses Ray)

- Gymnasium Documentation and Gymnasium repo - An API standard for single-agent reinforcement learning environments, with popular reference environments and related utilities (formerly Gym)

    - a maintained fork of OpenAI’s Gym library
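
Gymnasium environments expose `reset(seed=..., options=...)`, which returns `(observation, info)`, and `step(action)`, which returns `(observation, reward, terminated, truncated, info)`. The sketch below mimics that interface with a hypothetical `CoinFlipEnv` written in plain Python, so it runs without installing Gymnasium, and shows the standard interaction loop. With the real library the loop is the same once an environment has been created with, for example, `gymnasium.make("CartPole-v1")`.

```python
import random

class CoinFlipEnv:
    """Hypothetical toy env that mirrors Gymnasium's reset/step return signatures."""

    def __init__(self, max_steps: int = 5):
        self.max_steps = max_steps
        self._rng = random.Random()
        self._t = 0
        self._coin = 0

    def reset(self, seed=None, options=None):
        if seed is not None:
            self._rng.seed(seed)
        self._t = 0
        self._coin = self._rng.randint(0, 1)
        return self._coin, {}  # (observation, info)

    def step(self, action: int):
        # Reward 1.0 for naming the currently shown coin face, then flip a new coin.
        reward = 1.0 if action == self._coin else 0.0
        self._t += 1
        self._coin = self._rng.randint(0, 1)
        terminated = False                     # this toy task has no terminal state
        truncated = self._t >= self.max_steps  # episode ends on a time limit
        return self._coin, reward, terminated, truncated, {}

env = CoinFlipEnv(max_steps=5)
obs, info = env.reset(seed=0)
total = 0.0
done = False
while not done:
    action = obs  # trivially optimal policy: repeat the observed face
    obs, reward, terminated, truncated, info = env.step(action)
    total += reward
    done = terminated or truncated
print(total)  # 5.0
```

Keeping `terminated` and `truncated` separate matters for value-based and actor-critic methods: a time-limit truncation should not be treated as a true terminal state when bootstrapping.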

Resources

- ✨ DeepMind x UCL RL Lecture Series - Introduction to Reinforcement Learning [1/13]

    - …and the other parts of the 13-part lecture series

- Large language models (NLP817 12) by Herman Kamper:

- ✨ RLHF book by Nathan Lambert: Reinforcement Learning from Human Feedback: A short introduction to RLHF and post-training focused on language models.

- ✨ Reinforcement Learning: An Overview - Reinforcement Learning book by Kevin Murphy

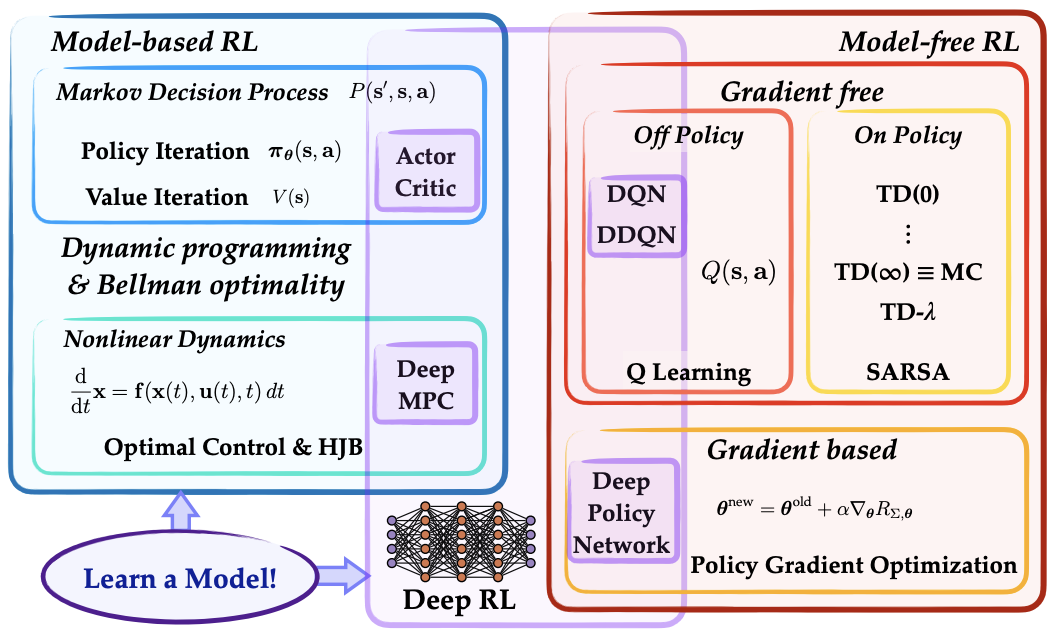

- Reinforcement Learning (Data-Driven Science and Engineering - Chapter 11) by Steven L. Brunton and J. Nathan Kutz

- Foundations of Deep RL lecture series by Pieter Abbeel

- Policy Gradient Algorithms - post by Lilian Weng

- ✨ Reinforcement Learning Lecture Series by Steve Brunton - follows the book chapter above

- DeepMind x UCL | Introduction to Reinforcement Learning 2015 - 10-lecture series by the one and only David Silver

- Reinforcement Learning: An Introduction (2nd edition, 2018) by Richard S. Sutton and Andrew G. Barto

- Multi-Agent Reinforcement Learning: Foundations and Modern Approaches (2024) by Stefano V. Albrecht, Filippos Christianos, Lukas Schäfer. MIT Press

- OpenAI Spinning Up

- MIT 6.S091: Introduction to Deep Reinforcement Learning (Deep RL) by Lex Fridman

- Deep Reinforcement Learning Course - HF 🤗 course

    - backed by the Hugging Face Deep Reinforcement Learning Course repo

    - the clipped copy of Chapter 1 is from an older version of the course: An Introduction to Deep Reinforcement Learning

- An Intuitive Explanation of Policy Gradient - Part 1: REINFORCE

- Posts by Nathan Lambert tagged RLHF on Interconnects: https://www.interconnects.ai/t/rlhf
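
Lilian Weng's post and the REINFORCE explainer both build up to the REINFORCE policy-gradient update, θ ← θ + α·G_t·∇_θ log π_θ(a_t|s_t). Below is a minimal sketch, assuming a hypothetical two-armed Bernoulli bandit with a softmax policy. Each episode is a single step, so the return G is just the reward; `discounted_returns` shows how returns-to-go would be computed for longer episodes. No baseline is used, which keeps the code short but leaves the gradient estimate high-variance. All names and hyperparameters are illustrative.

```python
import math
import random

def discounted_returns(rewards: list[float], gamma: float = 0.99) -> list[float]:
    """Returns-to-go G_t = r_t + gamma * G_{t+1}, computed backwards over one episode."""
    returns, g = [], 0.0
    for r in reversed(rewards):
        g = r + gamma * g
        returns.append(g)
    return returns[::-1]

def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_bandit(arm_means: list[float], steps: int = 2000, lr: float = 0.1, seed: int = 0) -> list[float]:
    """REINFORCE on a hypothetical Bernoulli bandit; returns the final action probabilities."""
    rng = random.Random(seed)
    theta = [0.0] * len(arm_means)  # logits of a softmax policy over arms
    for _ in range(steps):
        probs = softmax(theta)
        a = rng.choices(range(len(theta)), weights=probs)[0]  # sample a ~ pi_theta
        r = 1.0 if rng.random() < arm_means[a] else 0.0        # one-step episode: G = r
        for k in range(len(theta)):
            # d/d theta_k of log softmax(theta)[a] is 1[k == a] - probs[k]
            theta[k] += lr * r * ((1.0 if k == a else 0.0) - probs[k])
    return softmax(theta)

print(discounted_returns([1.0, 1.0, 1.0], gamma=0.5))  # [1.75, 1.5, 1.0]
print(reinforce_bandit([0.2, 0.8]))  # most probability mass should end up on arm 1
```

Subtracting a baseline (for example a running mean of rewards) from `r` before the update is the usual way to reduce variance without biasing the gradient.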

# Reinforcement Learning from Human Feedback by Nathan Lambert

A short introduction to RLHF and post-training focused on language models. 👉 https://rlhfbook.com/

- Introductions

- Problem Setup & Context

- Optimization Tools

- Advanced

- Open Questions

See also