Jack Parker-Holder

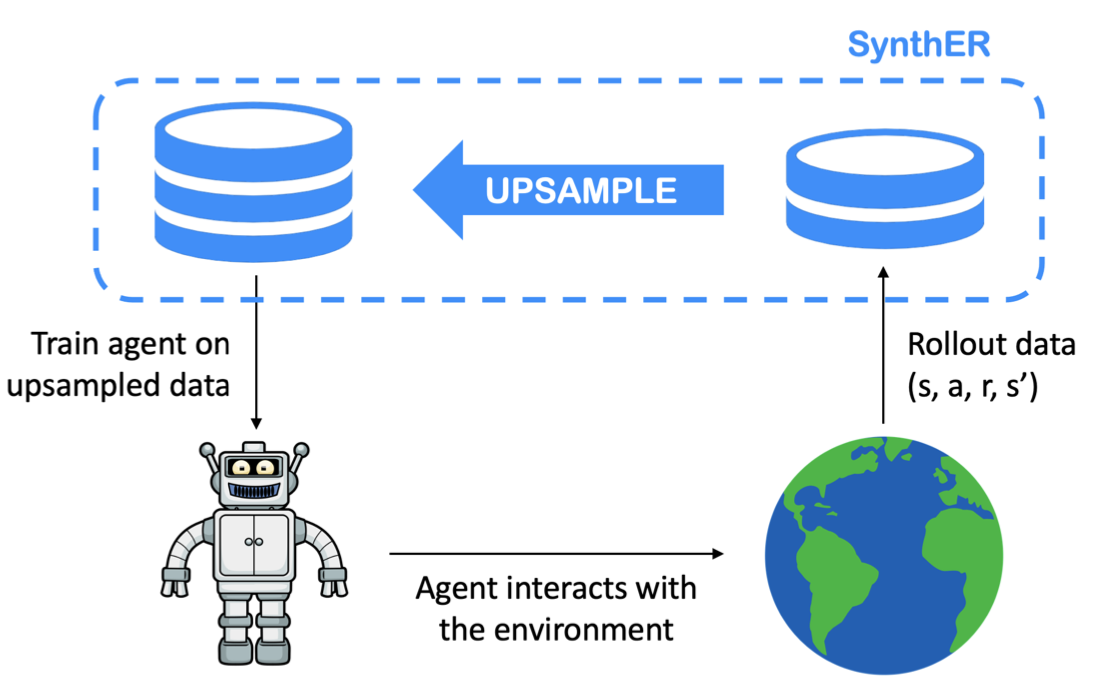

| Synthetic experience replay |

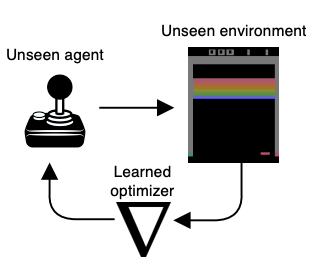

| Discovering General Reinforcement Learning Algorithms with Adversarial Environment Design |

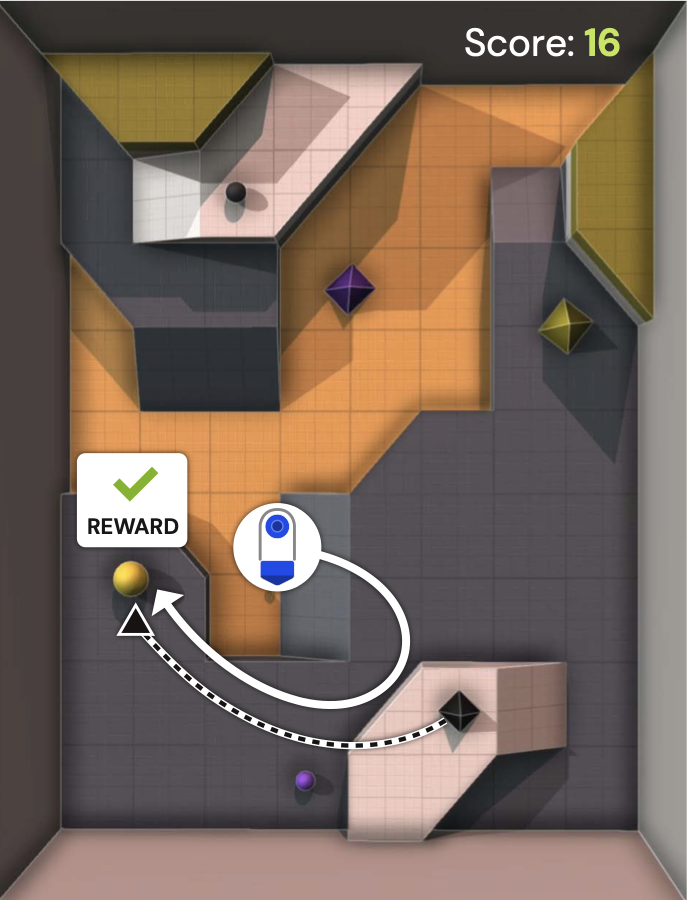

| Human-Timescale Adaptation in an Open-Ended Task Space |

| MAESTRO: Open-ended environment design for multi-agent reinforcement learning |

| Learning General World Models in a Handful of Reward-Free Deployments |

| Evolving Curricula with Regret-Based Environment Design |

| From block-Toeplitz matrices to differential equations on graphs: towards a general theory for scalable masked Transformers |

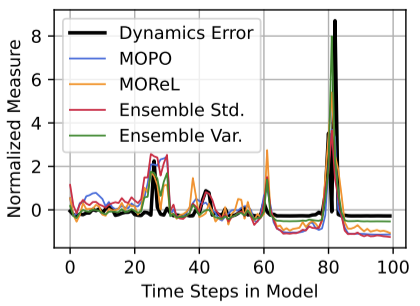

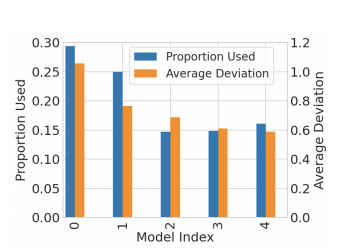

| Revisiting Design Choices in Offline Model-Based Reinforcement Learning |

| Towards an Understanding of Default Policies in Multitask Policy Optimization |

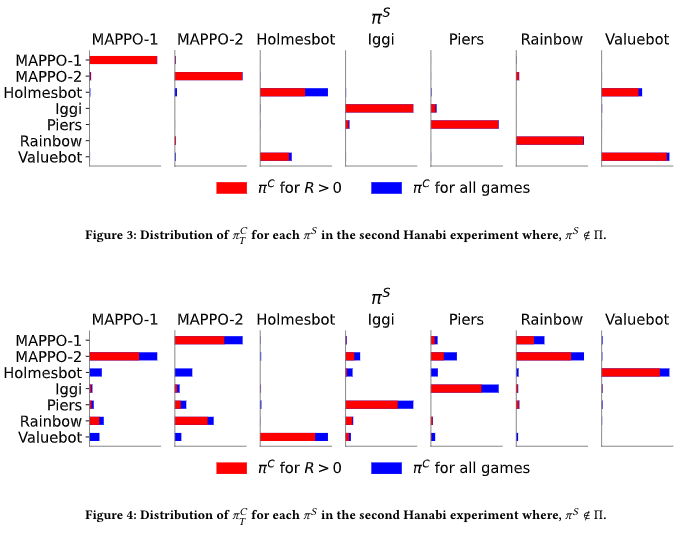

| On-the-fly Strategy Adaptation for ad-hoc Agent Coordination |

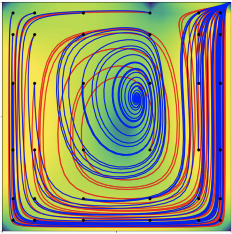

| Lyapunov Exponents for Diversity in Differentiable Games |

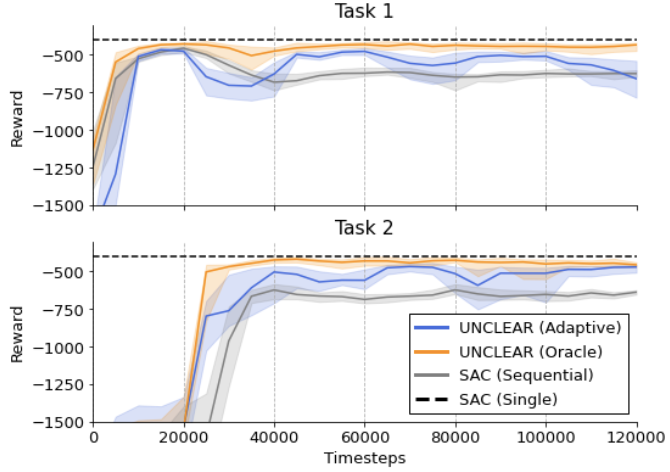

| Same State, Different Task: Continual Reinforcement Learning without Interference |

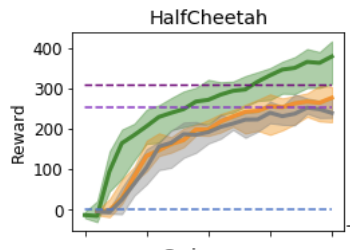

| Tuning Mixed Input Hyperparameters on the Fly for Efficient Population Based AutoRL |

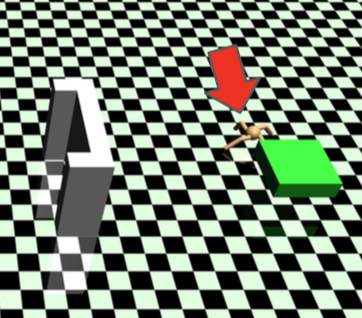

| Replay-Guided Adversarial Environment Design |

| Tactical Optimism and Pessimism for Deep Reinforcement Learning |

| MiniHack the Planet: A Sandbox for Open-Ended Reinforcement Learning Research |

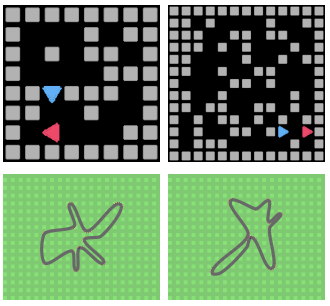

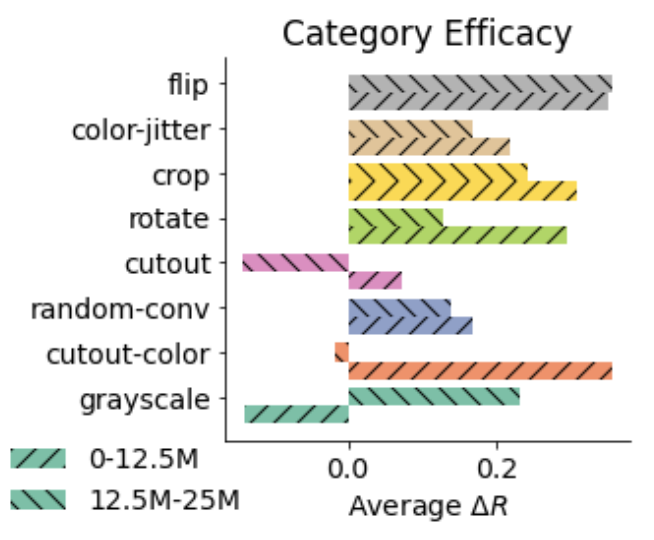

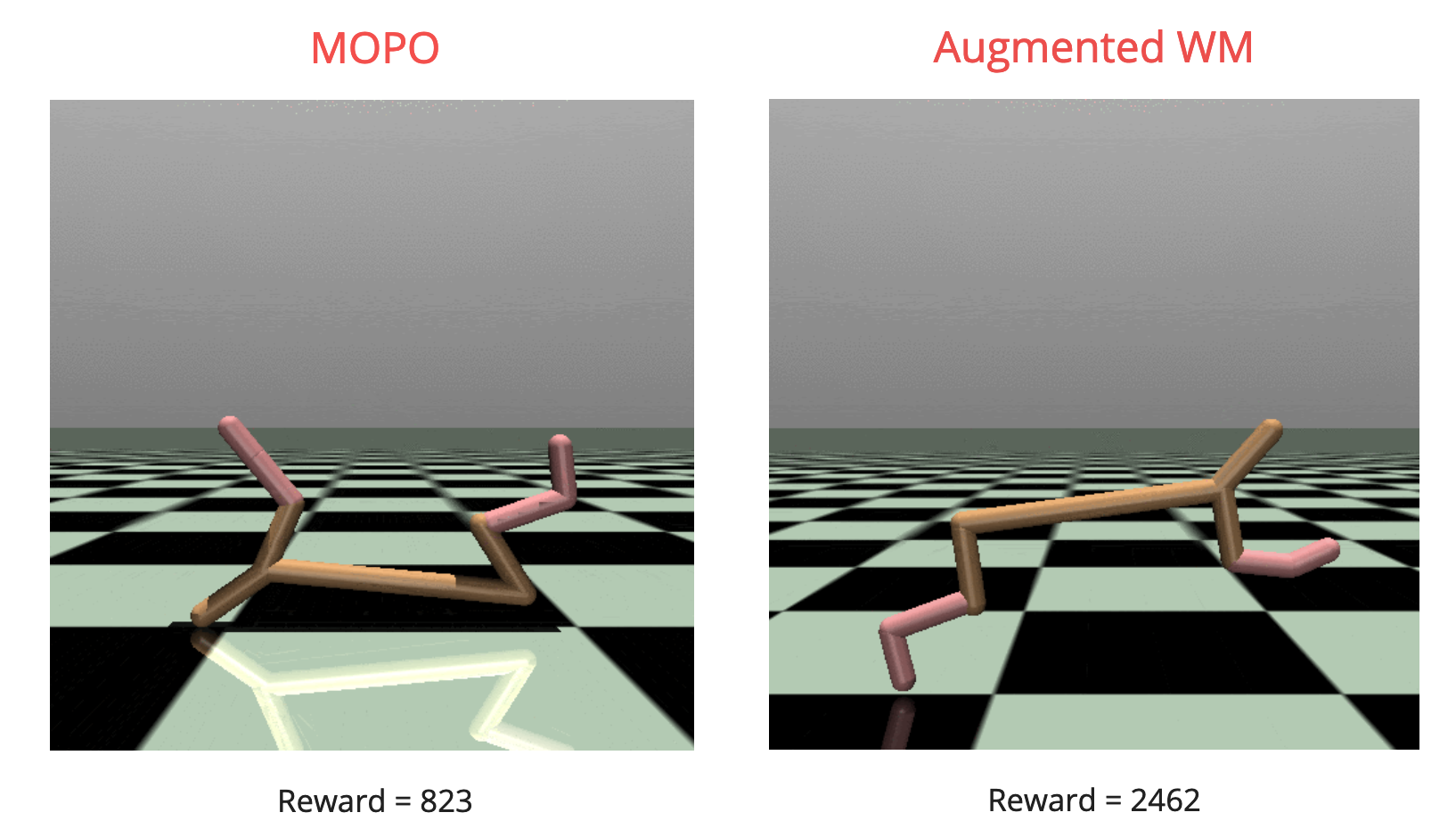

| Augmented World Models Facilitate Zero-Shot Dynamics Generalization From a Single Offline Environment |

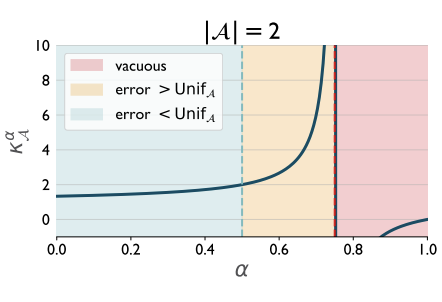

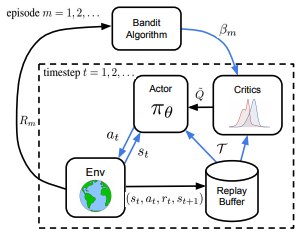

| Towards Tractable Optimism in Model-Based Reinforcement Learning |

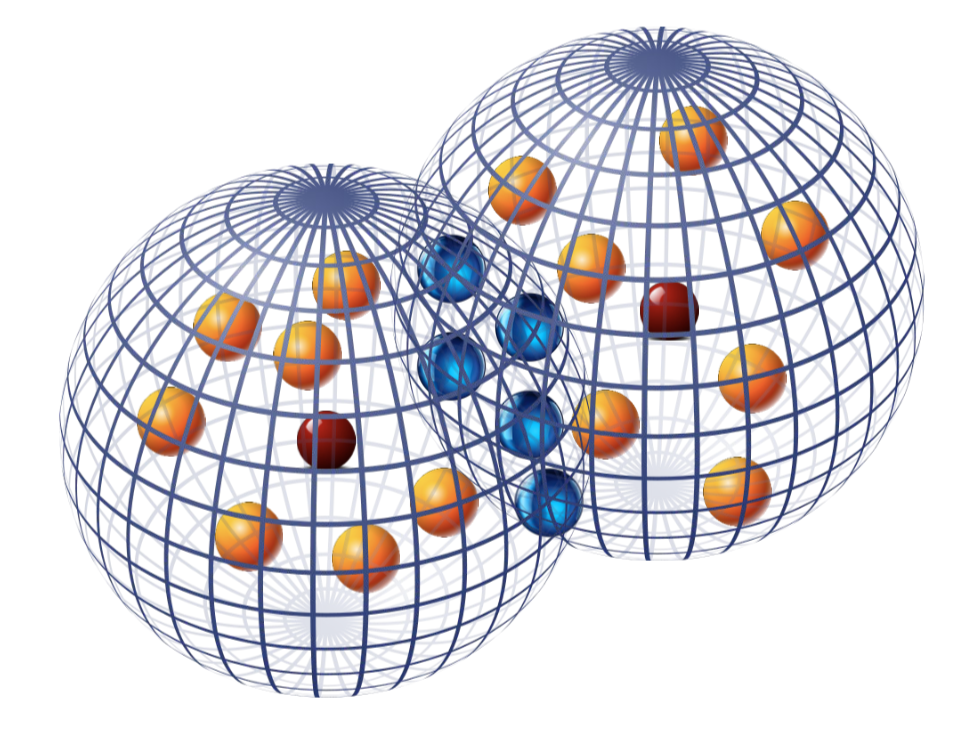

| Effective Diversity in Population-Based Reinforcement Learning |

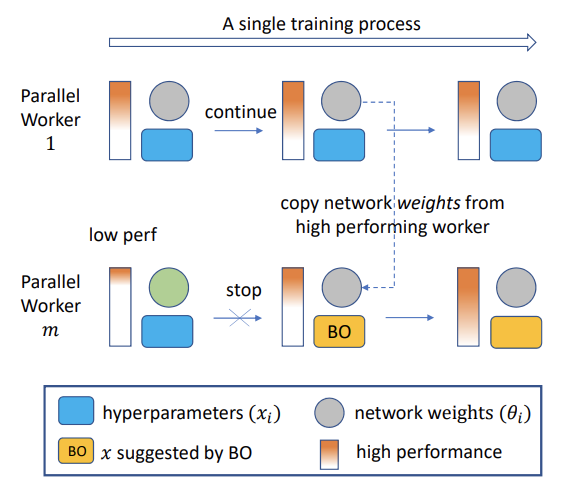

| Provably Efficient Online Hyperparameter Optimization with Population-Based Bandits |

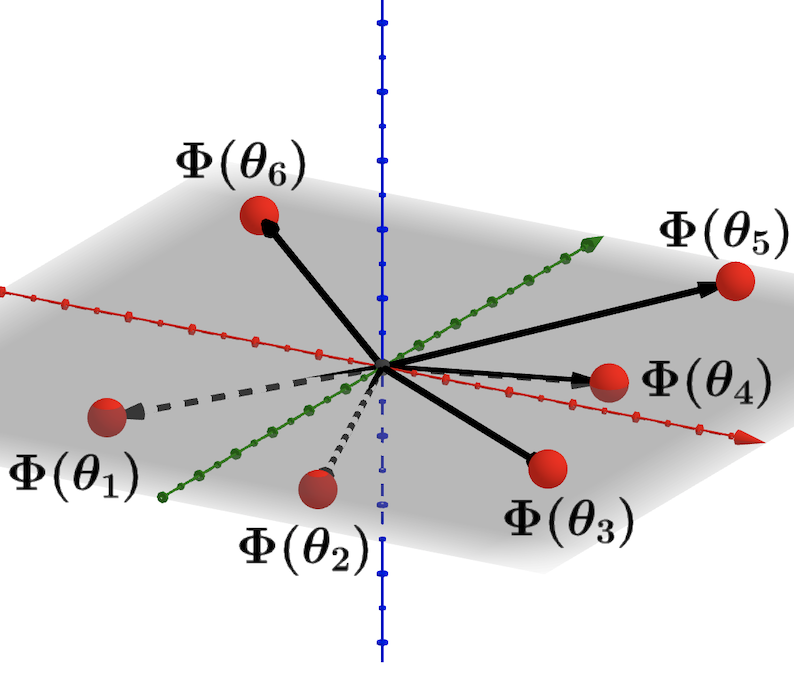

| Ridge Rider: Finding diverse solutions by following eigenvectors of the Hessian |

| Ready Policy One: World Building Through Active Learning |

| Learning to Score Behaviors for Guided Policy Optimization |

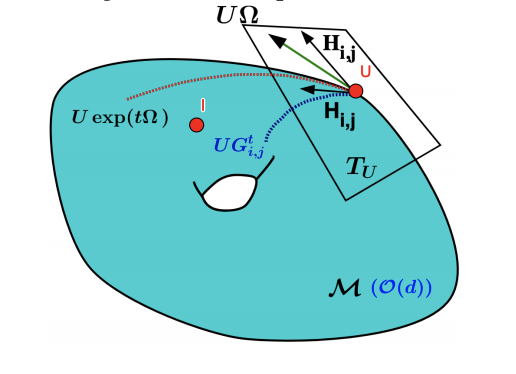

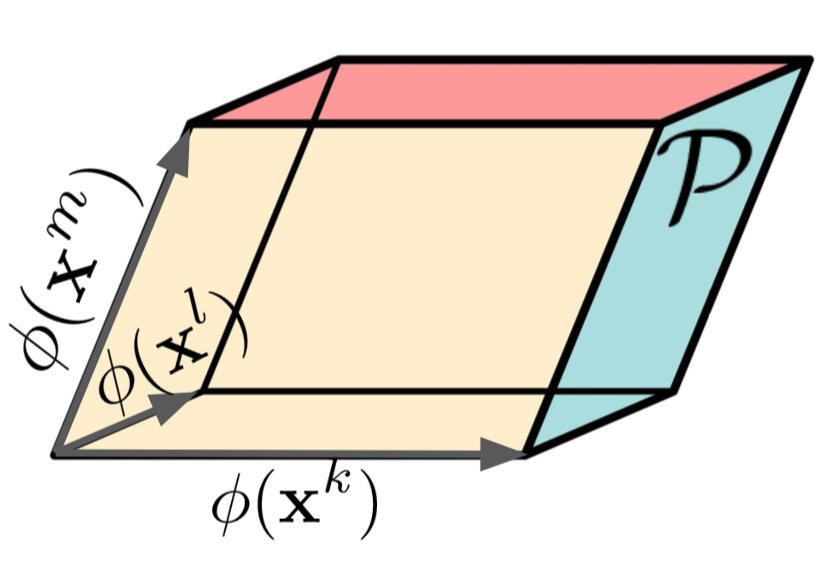

| Stochastic Flows and Geometric Optimization on the Orthogonal Group |

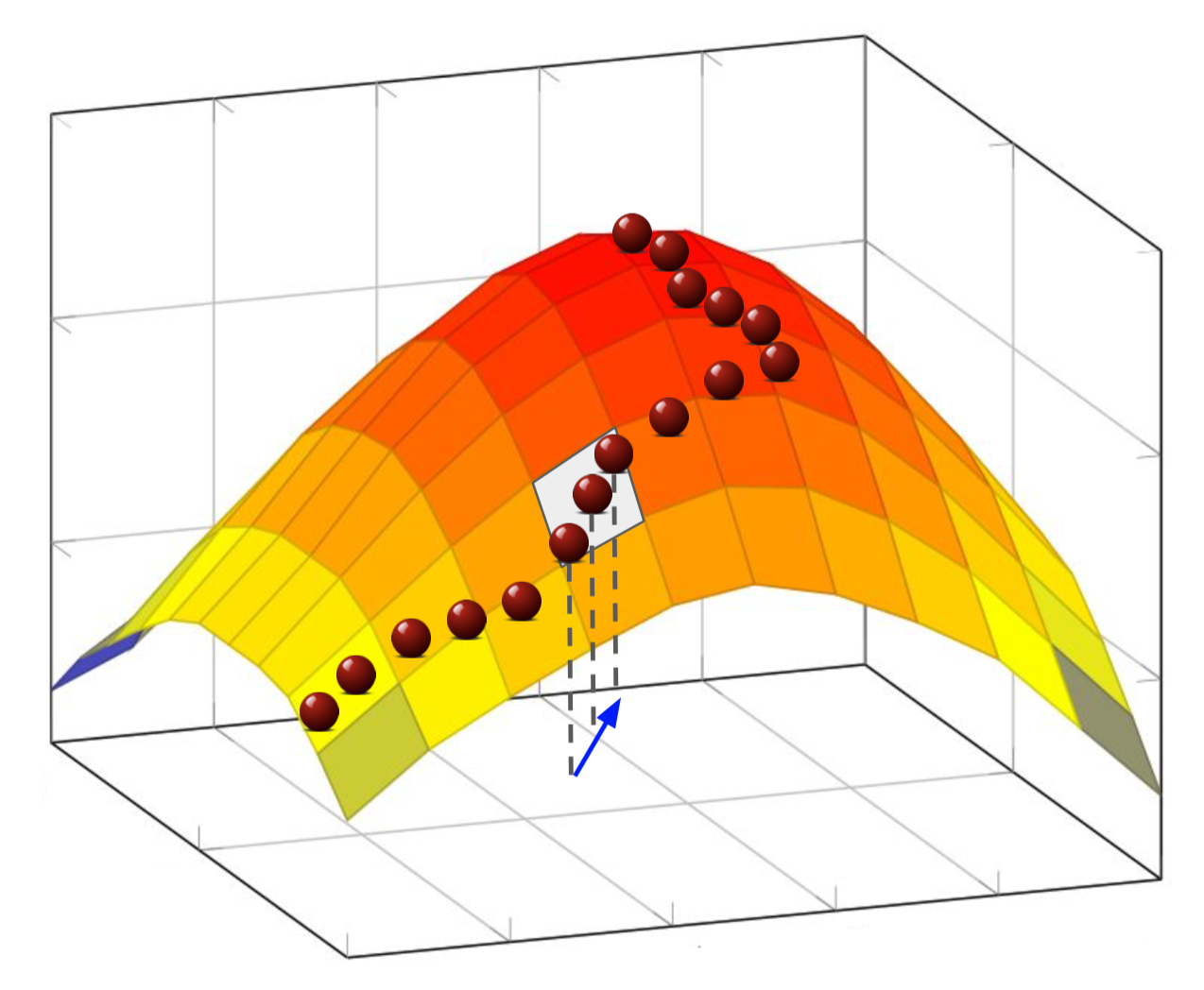

| From Complexity to Simplicity: Adaptive ES-Active Subspaces for Blackbox Optimization |

| Provably Robust Blackbox Optimization for Reinforcement Learning |